Tutorial: Better Retrieval with Embedding Retrieval

Last Updated: November 24, 2022

Importance of Retrievers

The Retriever has a huge impact on the performance of our overall search pipeline.

Different types of Retrievers

Sparse

Family of algorithms based on counting the occurrences of words (bag-of-words) resulting in very sparse vectors with length = vocab size.

Examples: BM25, TF-IDF

Pros: Simple, fast, well explainable

Cons: Relies on exact keyword matches between query and text

Dense

These retrievers use neural network models to create “dense” embedding vectors. Within this family, there are two different approaches:

a) Single encoder: Use a single model to embed both the query and the passage. b) Dual-encoder: Use two models, one to embed the query and one to embed the passage.

Examples: REALM, DPR, Sentence-Transformers

Pros: Captures semantic similarity instead of “word matches” (for example, synonyms, related topics).

Cons: Computationally more heavy to use, initial training of the model (though this is less of an issue nowadays as many pre-trained models are available and most of the time, it’s not needed to train the model).

Embedding Retrieval

In this Tutorial, we use an EmbeddingRetriever with

Sentence Transformers models.

These models are trained to embed similar sentences close to each other in a shared embedding space.

Some models have been fine-tuned on massive Information Retrieval data and can be used to retrieve documents based on a short query (for example, multi-qa-mpnet-base-dot-v1). There are others that are more suited to semantic similarity tasks where you are trying to find the most similar documents to a given document (for example, all-mpnet-base-v2). There are even models that are multilingual (for example, paraphrase-multilingual-mpnet-base-v2). For a good overview of different models with their evaluation metrics, see the

Pretrained Models in the Sentence Transformers documentation.

Use this link to open the notebook in Google Colab.

Prepare the Environment

Colab: Enable the GPU Runtime

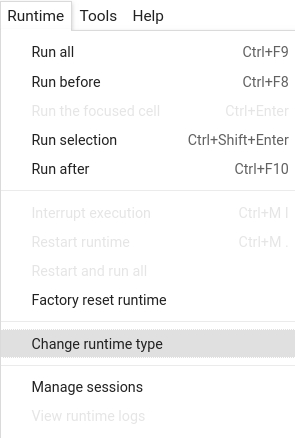

Make sure you enable the GPU runtime to experience decent speed in this tutorial. Runtime -> Change Runtime type -> Hardware accelerator -> GPU

You can double check whether the GPU runtime is enabled with the following command:

%%bash

nvidia-smi

To start, install the latest release of Haystack with pip:

%%bash

pip install --upgrade pip

pip install git+https://github.com/deepset-ai/haystack.git#egg=farm-haystack[colab,faiss]

Logging

We configure how logging messages should be displayed and which log level should be used before importing Haystack. Example log message: INFO - haystack.utils.preprocessing - Converting data/tutorial1/218_Olenna_Tyrell.txt Default log level in basicConfig is WARNING so the explicit parameter is not necessary but can be changed easily:

import logging

logging.basicConfig(format="%(levelname)s - %(name)s - %(message)s", level=logging.WARNING)

logging.getLogger("haystack").setLevel(logging.INFO)

Document Store

Option 1: FAISS

FAISS is a library for efficient similarity search on a cluster of dense vectors.

The FAISSDocumentStore uses a SQL(SQLite in-memory be default) database under-the-hood

to store the document text and other meta data. The vector embeddings of the text are

indexed on a FAISS Index that later is queried for searching answers.

The default flavour of FAISSDocumentStore is “Flat” but can also be set to “HNSW” for

faster search at the expense of some accuracy. Just set the faiss_index_factor_str argument in the constructor.

For more info on which suits your use case:

https://github.com/facebookresearch/faiss/wiki/Guidelines-to-choose-an-index

from haystack.document_stores import FAISSDocumentStore

document_store = FAISSDocumentStore(faiss_index_factory_str="Flat")

Option 2: Milvus

Milvus is an open source database library that is also optimized for vector similarity searches like FAISS. Like FAISS it has both a “Flat” and “HNSW” mode but it outperforms FAISS when it comes to dynamic data management. It does require a little more setup, however, as it is run through Docker and requires the setup of some config files. See their docs for more details.

# Milvus cannot be run on COlab, so this cell is commented out.

# To run Milvus you need Docker (versions below 2.0.0) or a docker-compose (versions >= 2.0.0), neither of which is available on Colab.

# See Milvus' documentation for more details: https://milvus.io/docs/install_standalone-docker.md

# !pip install git+https://github.com/deepset-ai/haystack.git#egg=farm-haystack[milvus]

# from haystack.utils import launch_milvus

# from haystack.document_stores import MilvusDocumentStore

# launch_milvus()

# document_store = MilvusDocumentStore()

Cleaning & indexing documents

Similarly to the previous tutorials, we download, convert and index some Game of Thrones articles to our DocumentStore

from haystack.utils import clean_wiki_text, convert_files_to_docs, fetch_archive_from_http

# Let's first get some files that we want to use

doc_dir = "data/tutorial6"

s3_url = "https://s3.eu-central-1.amazonaws.com/deepset.ai-farm-qa/datasets/documents/wiki_gameofthrones_txt6.zip"

fetch_archive_from_http(url=s3_url, output_dir=doc_dir)

# Convert files to dicts

docs = convert_files_to_docs(dir_path=doc_dir, clean_func=clean_wiki_text, split_paragraphs=True)

# Now, let's write the dicts containing documents to our DB.

document_store.write_documents(docs)

Initialize Retriever, Reader & Pipeline

Retriever

Here: We use an EmbeddingRetriever.

Alternatives:

BM25Retrieverwith custom queries (for example, boosting) and filtersDensePassageRetrieverwhich uses two encoder models, one to embed the query and one to embed the passage, and then compares the embedding for retrievalTfidfRetrieverin combination with a SQL or InMemory Document store for simple prototyping and debugging

from haystack.nodes import EmbeddingRetriever

retriever = EmbeddingRetriever(

document_store=document_store,

embedding_model="sentence-transformers/multi-qa-mpnet-base-dot-v1",

model_format="sentence_transformers",

)

# Important:

# Now that we initialized the Retriever, we need to call update_embeddings() to iterate over all

# previously indexed documents and update their embedding representation.

# While this can be a time consuming operation (depending on the corpus size), it only needs to be done once.

# At query time, we only need to embed the query and compare it to the existing document embeddings, which is very fast.

document_store.update_embeddings(retriever)

Reader

Similar to previous Tutorials we now initalize our reader.

Here we use a FARMReader with the deepset/roberta-base-squad2 model (see: https://huggingface.co/deepset/roberta-base-squad2)

FARMReader

from haystack.nodes import FARMReader

# Load a local model or any of the QA models on

# Hugging Face's model hub (https://huggingface.co/models)

reader = FARMReader(model_name_or_path="deepset/roberta-base-squad2", use_gpu=True)

Pipeline

With a Haystack Pipeline you can stick together your building blocks to a search pipeline.

Under the hood, Pipelines are Directed Acyclic Graphs (DAGs) that you can easily customize for your own use cases.

To speed things up, Haystack also comes with a few predefined Pipelines. One of them is the ExtractiveQAPipeline that combines a retriever and a reader to answer our questions.

You can learn more about Pipelines in the

docs.

from haystack.pipelines import ExtractiveQAPipeline

pipe = ExtractiveQAPipeline(reader, retriever)

Voilà! Ask a question!

# You can configure how many candidates the reader and retriever shall return

# The higher top_k for retriever, the better (but also the slower) your answers.

prediction = pipe.run(

query="Who created the Dothraki vocabulary?", params={"Retriever": {"top_k": 10}, "Reader": {"top_k": 5}}

)

from haystack.utils import print_answers

print_answers(prediction, details="minimum")

About us

This Haystack notebook was made with love by deepset in Berlin, Germany

We bring NLP to the industry via open source!

Our focus: Industry specific language models & large scale QA systems.

Some of our other work:

Get in touch: Twitter | LinkedIn | Discord | GitHub Discussions | Website

By the way: we’re hiring!